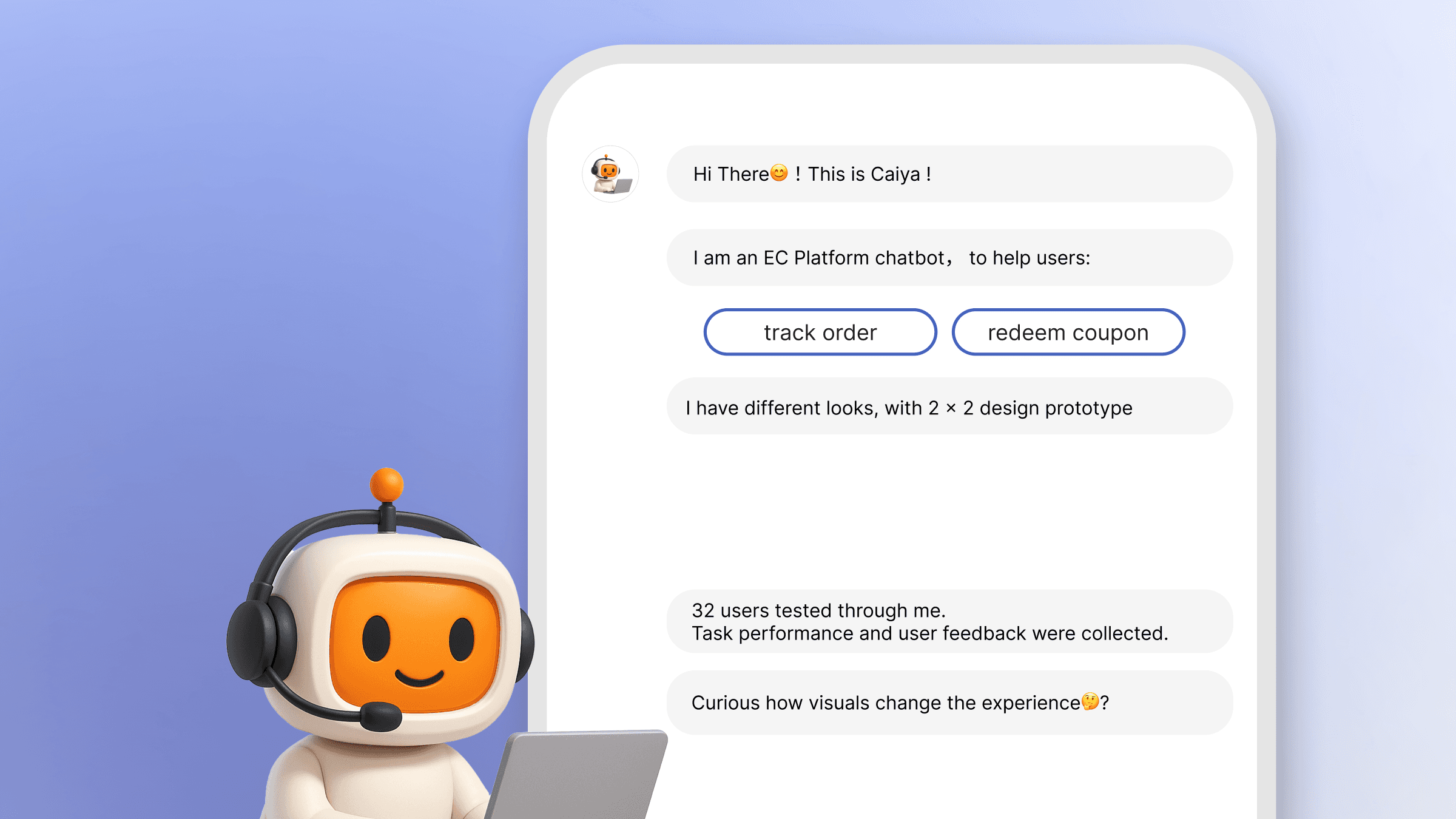

Current e-commerce chatbots often suffer from "conversational coldness" and high friction during complex tasks. This research shifts the focus from purely verbal logic to Visual Interaction Cues (VICs) and Avatar Embodiment.

The goal: Transform technical AI capabilities into predictable business value by examining how visual cues impact user performance and emotional comfort during tracking and voucher-redemption flows.

Precision-Led Inquiry: Defining the Scope of AI UX.

RQ1 (Functional): How do avatar embodiment and VICs affect task-oriented experience — specifically clarity and task efficiency — in e-commerce AI?

RQ2 (Emotional): How do these elements influence emotion-oriented metrics such as satisfaction, trust, and emotional comfort?

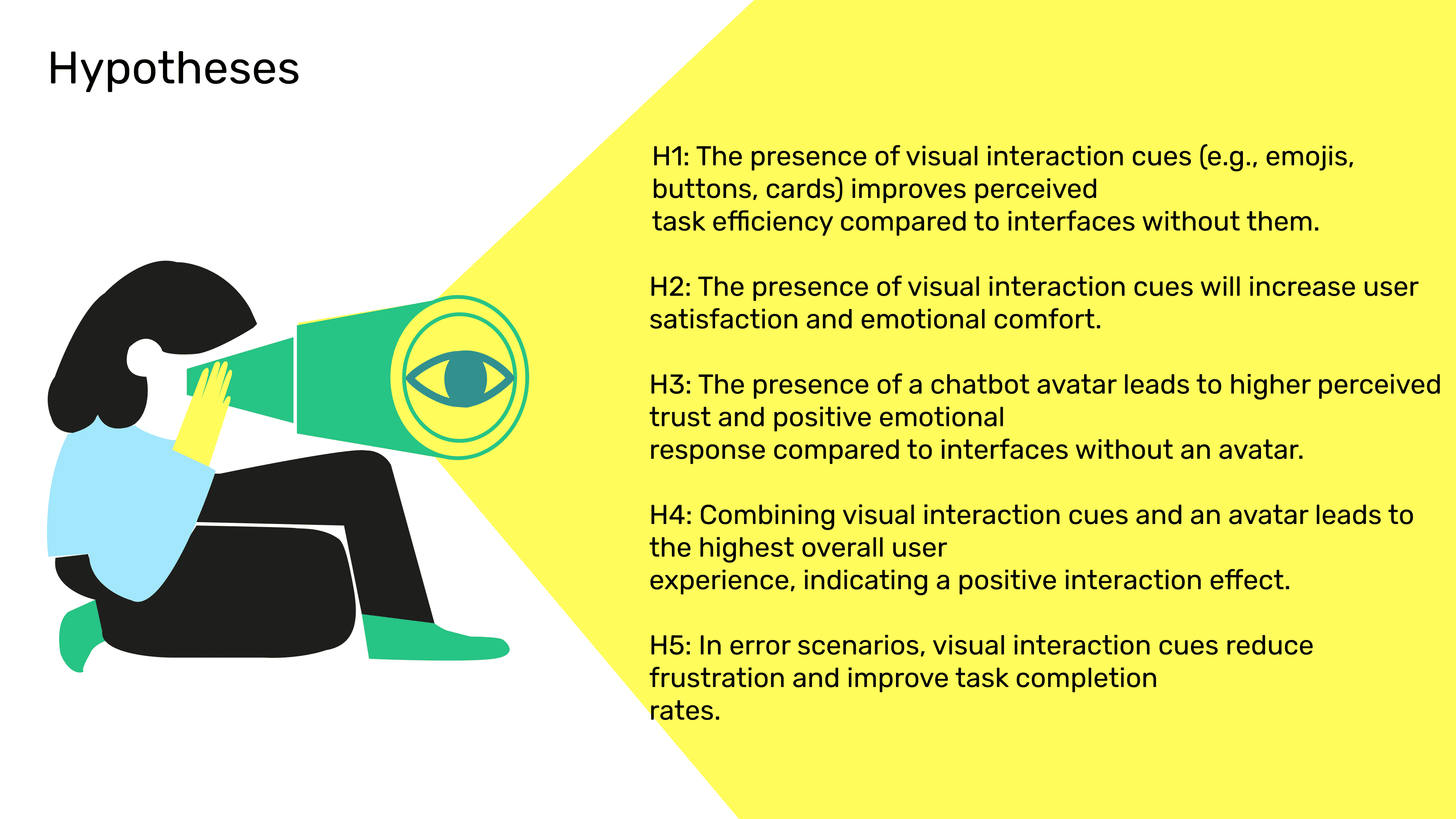

Formulated 5 hypotheses targeting both functional performance and emotional resonance.

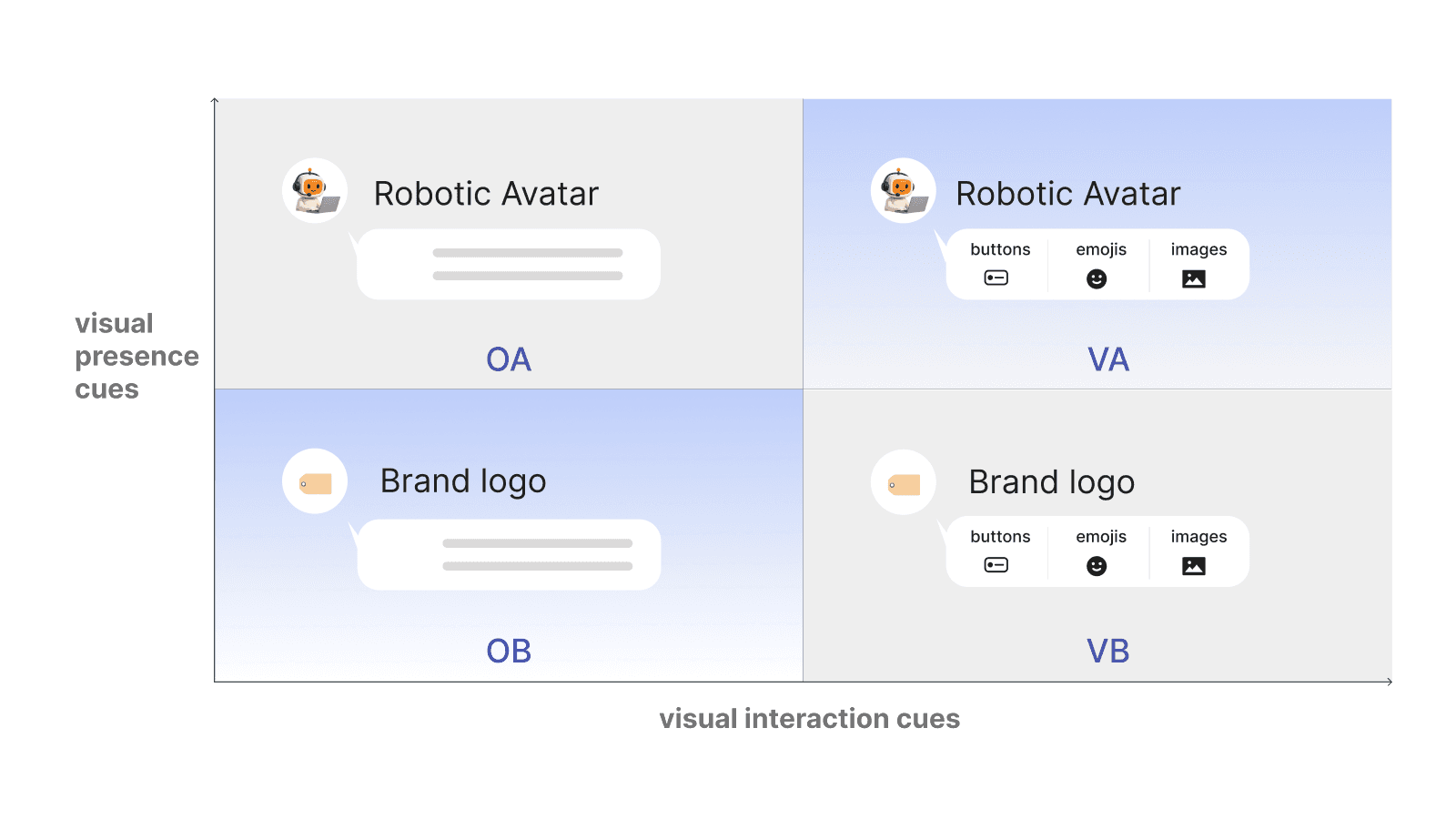

Experimental Framework: Conducted a rigorous 2×2 between-subjects experiment with 32 participants across four high-fidelity prototype variations (VA, VB, OA, OB).
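For a between-subjects design, each participant sees exactly one prototype variation. Below is a minimal sketch of balanced random assignment of 32 participants to the four variations. It assumes equal cells of 8 per condition, which the design suggests but does not state. `assign_balanced` is a hypothetical helper, and the labels VA, VB, OA and OB are treated as opaque strings because their mapping to factor levels is not spelled out here.

```python
import random
from collections import Counter

# Condition labels as named in the study; treated as opaque labels here.
CONDITIONS = ["VA", "VB", "OA", "OB"]

def assign_balanced(participant_ids, conditions=CONDITIONS, seed=None):
    """Randomly assign participants so every condition gets the same count.

    Assumption: the participant count is a multiple of the number of conditions.
    """
    if len(participant_ids) % len(conditions) != 0:
        raise ValueError("participant count must be a multiple of the condition count")
    per_cell = len(participant_ids) // len(conditions)
    # Build one slot per participant (per_cell slots per condition), then shuffle.
    slots = [c for c in conditions for _ in range(per_cell)]
    rng = random.Random(seed)  # seeded so the allocation can be reproduced
    rng.shuffle(slots)
    return dict(zip(participant_ids, slots))

participants = [f"P{i:02d}" for i in range(1, 33)]  # hypothetical IDs P01..P32
assignment = assign_balanced(participants, seed=42)
print(Counter(assignment.values()))  # each condition appears 8 times
```

Shuffling a pre-filled list of slots, rather than drawing a condition independently for each person, guarantees equal cell sizes while keeping the order random.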

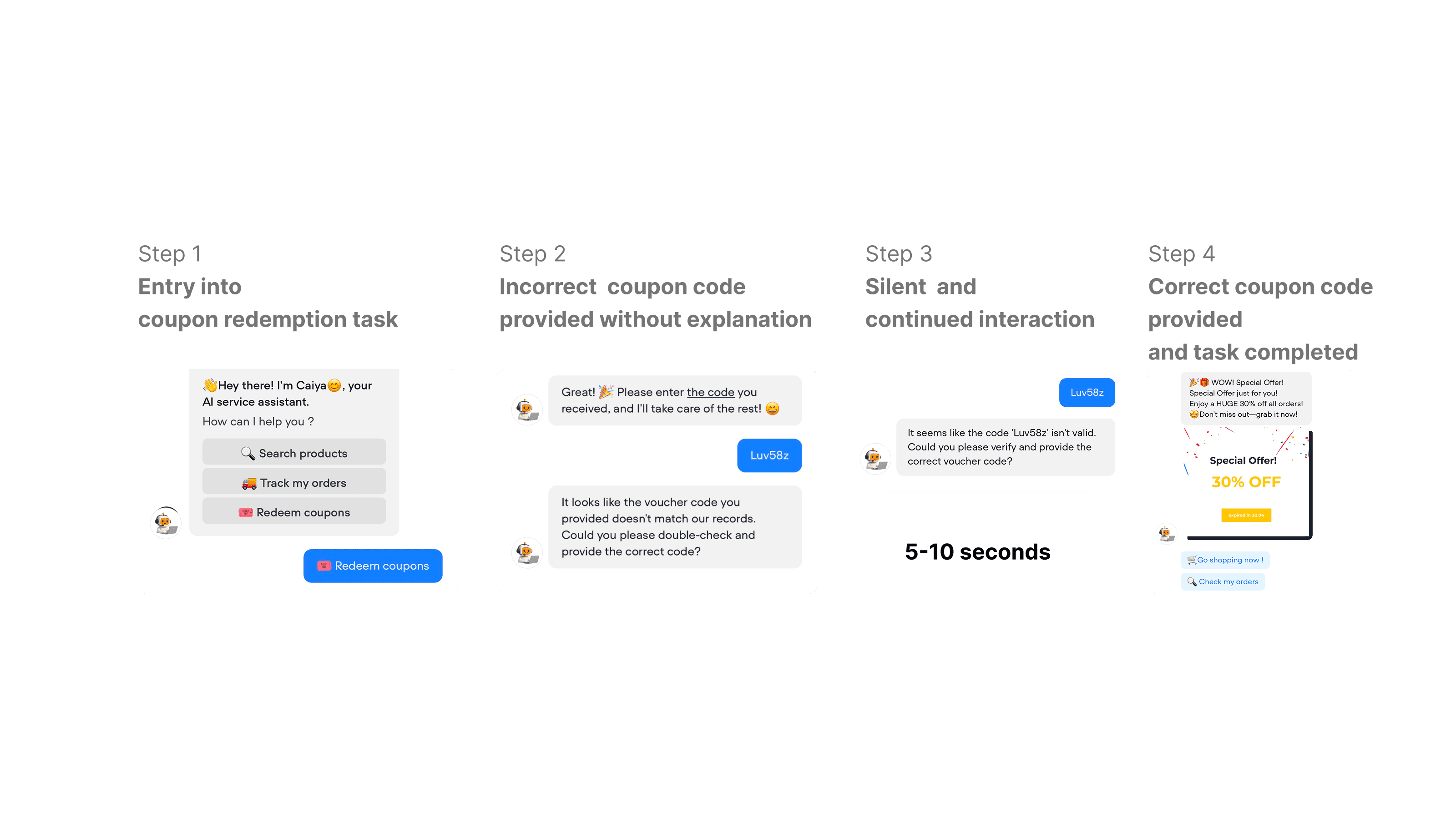

Wrong Path Design: To avoid "process theater," I embedded a simulated system failure (undisclosed voucher entry error). This allowed authentic observation of user frustration and recovery behavior in non-ideal scenarios.
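The injected failure can be thought of as a rule in the voucher step: reject the first submission no matter what, then validate normally. The sketch below is a hypothetical illustration of that logic in plain Python. It is not the Voiceflow configuration used in the prototype. `VoucherFlow`, its outcome strings and the case-insensitive matching are all assumptions.

```python
class VoucherFlow:
    """Hypothetical voucher-redemption step with one undisclosed injected failure."""

    def __init__(self, valid_codes, fail_first_attempt=True):
        # Normalize stored codes so matching ignores case and surrounding spaces.
        self.valid_codes = {c.strip().upper() for c in valid_codes}
        self.fail_first_attempt = fail_first_attempt
        self.attempts = 0
        self.log = []  # (attempt number, raw input, outcome) for later analysis

    def submit(self, code):
        self.attempts += 1
        if self.fail_first_attempt and self.attempts == 1:
            outcome = "injected_error"  # simulated system failure, shown to user as an error
        elif code.strip().upper() in self.valid_codes:
            outcome = "redeemed"
        else:
            outcome = "invalid_code"
        self.log.append((self.attempts, code, outcome))
        return outcome

flow = VoucherFlow(["SAVE10"])
print(flow.submit("SAVE10"))  # first attempt always fails: injected_error
print(flow.submit("save10"))  # retry succeeds: redeemed
```

Keeping a per-attempt log makes recovery behavior measurable, for example the number of retries after the injected error.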

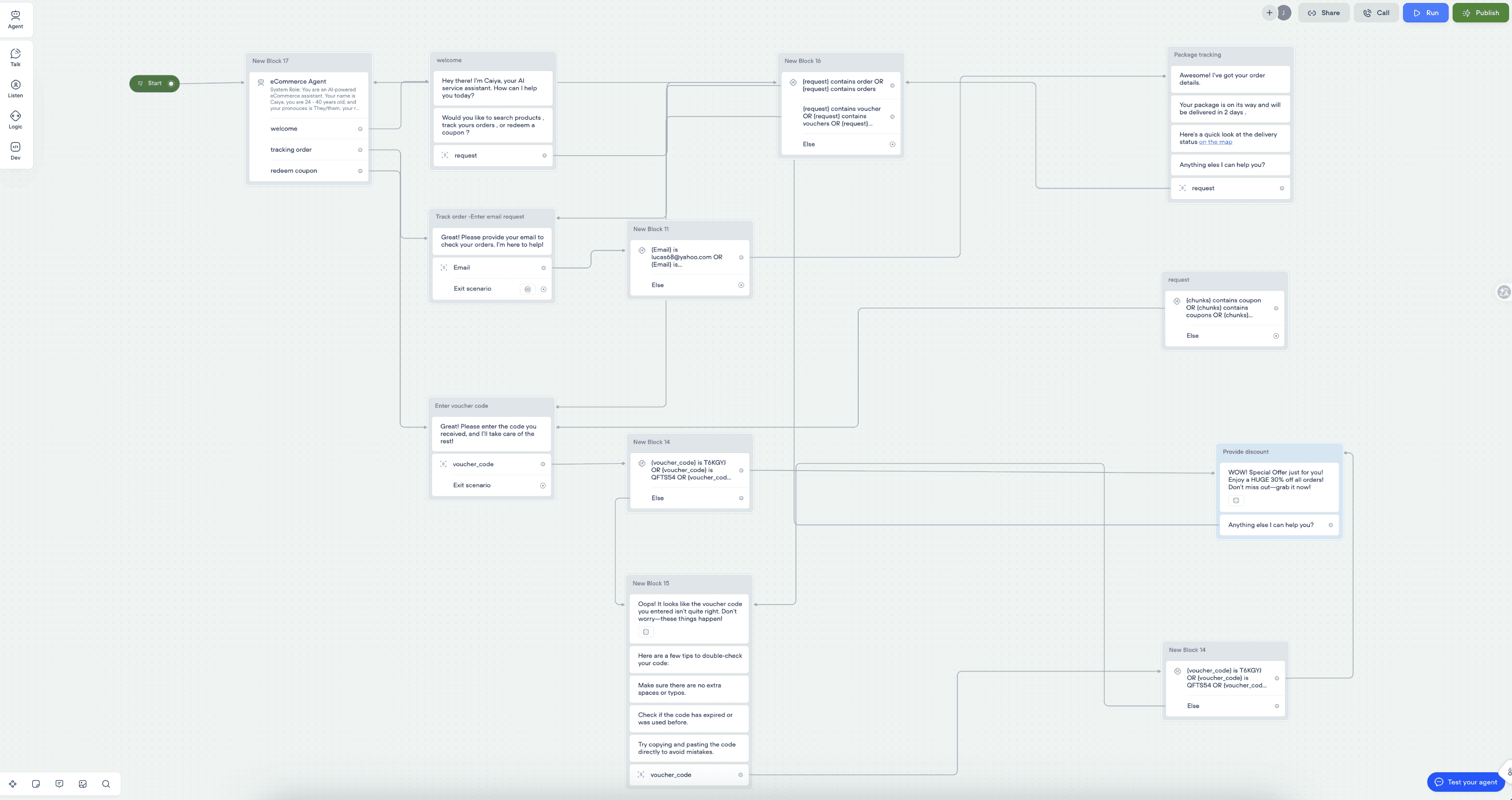

Technical Realization: Moving beyond static mockups, I architected a functional conversational system using Voiceflow integrated via API with GPT-3.5 Turbo.

- The CUQ: Utilized the Chatbot Usability Questionnaire developed by Ulster University

- High-Fidelity Behavioral Tracking: Task Duration and Error Rate

- Think Aloud: Conducted direct observation during the undisclosed "Wrong Path" scenario
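The CUQ has 16 five-point Likert items. The sketch below follows the CUQ scoring as we understand it: odd-numbered items are worded positively and even-numbered items negatively, and the score is ((positive sum - 8) + (40 - negative sum)) / 64 × 100, giving a 0 to 100 range. Verify this against Ulster University's official CUQ materials before relying on it. The per-condition aggregation of task duration and error rate is also hypothetical. The record fields (`condition`, `cuq`, `duration_s`, `errors`, `steps`) and the definition of error rate as errors per attempted step are assumptions.

```python
from statistics import mean

def cuq_score(responses):
    """Compute a 0-100 CUQ score from 16 Likert responses (1-5), ordered Q1..Q16.

    Assumes odd-numbered items are positive and even-numbered items are negative.
    """
    if len(responses) != 16 or not all(1 <= r <= 5 for r in responses):
        raise ValueError("expected 16 responses in the range 1-5")
    positive = sum(responses[0::2])  # Q1, Q3, ..., Q15
    negative = sum(responses[1::2])  # Q2, Q4, ..., Q16
    return ((positive - 8) + (40 - negative)) / 64 * 100

def summarize(sessions):
    """Average CUQ score, task duration and error rate per condition."""
    by_condition = {}
    for s in sessions:
        by_condition.setdefault(s["condition"], []).append(s)
    summary = {}
    for condition, rows in by_condition.items():
        summary[condition] = {
            "mean_cuq": mean(cuq_score(r["cuq"]) for r in rows),
            "mean_duration_s": mean(r["duration_s"] for r in rows),
            # Assumed definition: total errors divided by total attempted steps.
            "error_rate": sum(r["errors"] for r in rows) / sum(r["steps"] for r in rows),
        }
    return summary

print(cuq_score([5, 1] * 8))  # best possible answers: 100.0
print(cuq_score([3] * 16))    # all neutral answers: 50.0
```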

Recruited 32 participants via random intercept surveys in naturalistic settings (university cafeteria and library).

- Advanced Trust Calibration: Moving toward 7-point measurement scales to detect finer-grained shifts that a "ceiling effect" on shorter scales can mask.

- Adaptive Interaction Logic: Transitioning to adaptive AI interfaces that dynamically balance visual richness with verbal guidance.

- Inclusive System Scaling: Prioritizing platform-neutral UI and demographic parity.
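Before adopting a wider scale, a ceiling effect can be checked directly by measuring how many responses sit at the scale maximum. The sketch below shows one way to do this. The 15% threshold is a heuristic often used in questionnaire validation and is an assumption here. `ceiling_share` and `has_ceiling_effect` are hypothetical helpers.

```python
def ceiling_share(responses, scale_max):
    """Fraction of responses at the top point of the scale."""
    if not responses:
        raise ValueError("no responses")
    return sum(1 for r in responses if r == scale_max) / len(responses)

def has_ceiling_effect(responses, scale_max, threshold=0.15):
    """Flag an item when more than `threshold` of responses hit the maximum.

    The 15% default is an assumed heuristic, not a value from this study.
    """
    return ceiling_share(responses, scale_max) > threshold

item_responses = [5, 5, 4, 3]  # illustrative values only, not study data
print(ceiling_share(item_responses, 5))       # 0.5
print(has_ceiling_effect(item_responses, 5))  # True
```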